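Content provided by LessWrong. All podcast content, including episodes, graphics, and podcast descriptions, is uploaded and provided directly by LessWrong or its podcast platform partner. If you believe someone is using your copyrighted work without your permission, you can follow the process outlined here: https://zh.player.fm/legal.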

“My theory of change for working in AI healthtech” by Andrew_Critch

This post starts out pretty gloomy but ends up with some points that I feel pretty positive about. Day to day, I'm more focussed on the positive points, but awareness of the negative has been crucial to forming my priorities, so I'm going to start with those. It's mostly addressed to the EA community, but is hopefully somewhat of interest to LessWrong and the Alignment Forum as well.

My main concerns

I think AGI is going to be developed soon, and quickly. Possibly (20%) that's next year, and most likely (80%) before the end of 2029. These are not things you need to believe for yourself in order to understand my view, so no worries if you're not personally convinced of this.

(For what it's worth, I did arrive at this view through years of study and research in AI, combined with over a decade of private forecasting practice [...]

---

Outline:

(00:28) My main concerns

(03:41) Extinction by industrial dehumanization

(06:00) Successionism as a driver of industrial dehumanization

(11:08) My theory of change: confronting successionism with human-specific industries

(15:53) How I identified healthcare as the industry most relevant to caring for humans

(20:00) But why not just do safety work with big AI labs or governments?

(23:22) Conclusion

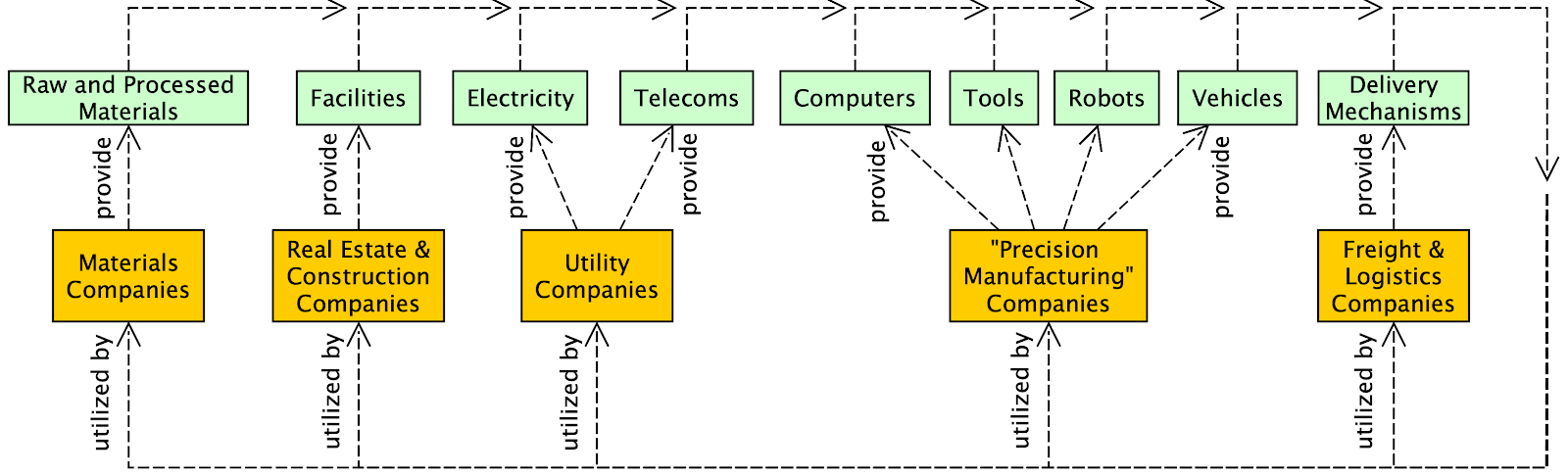

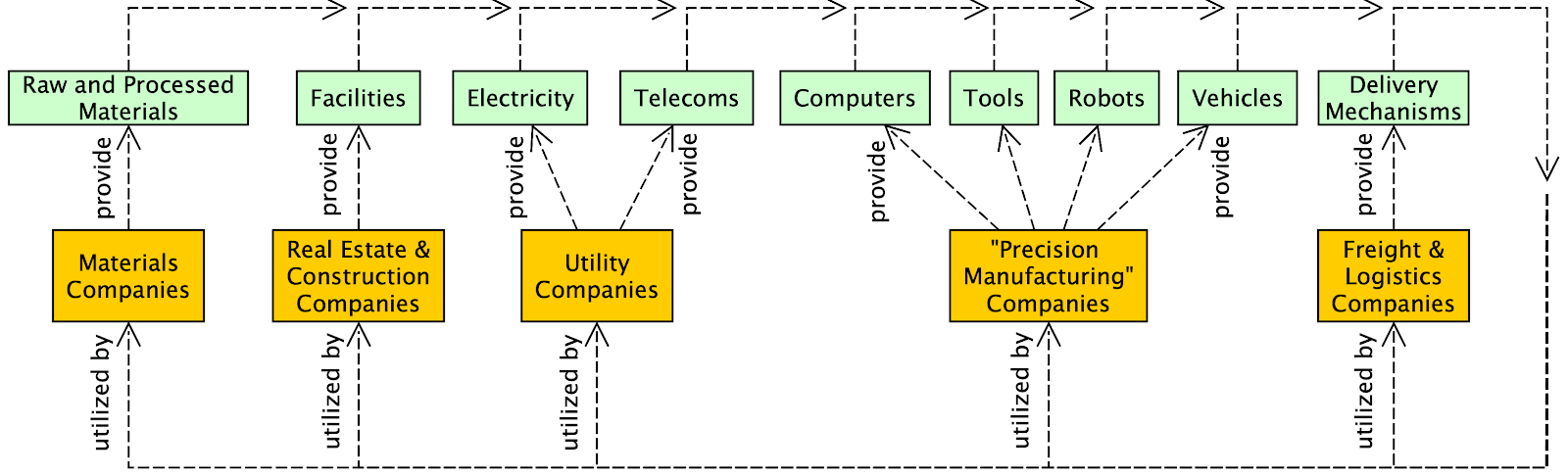

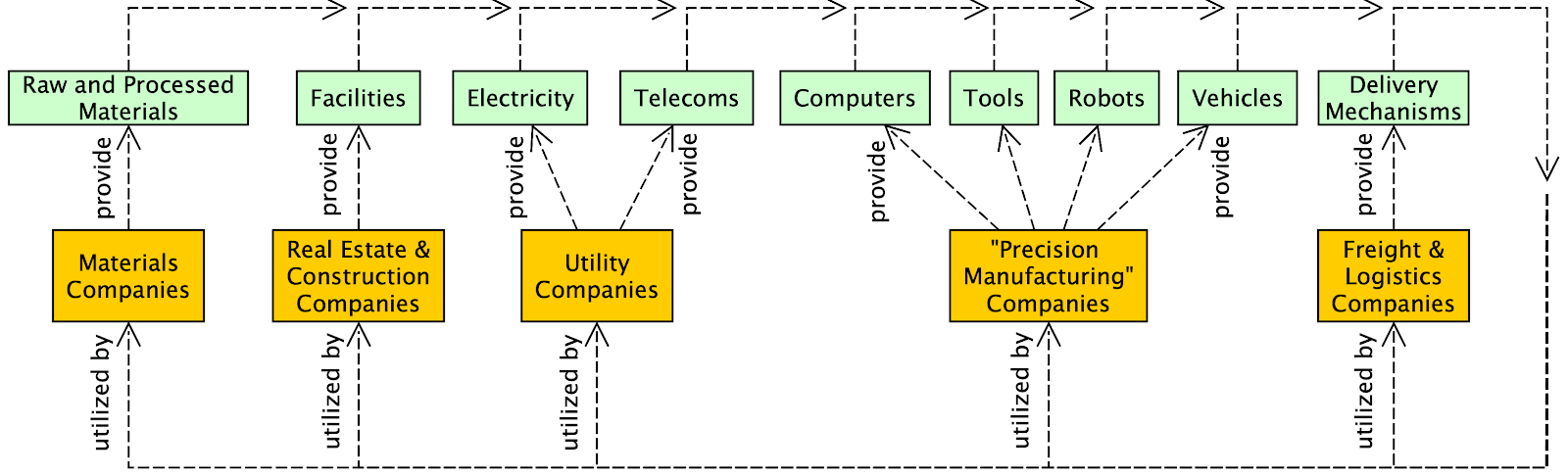

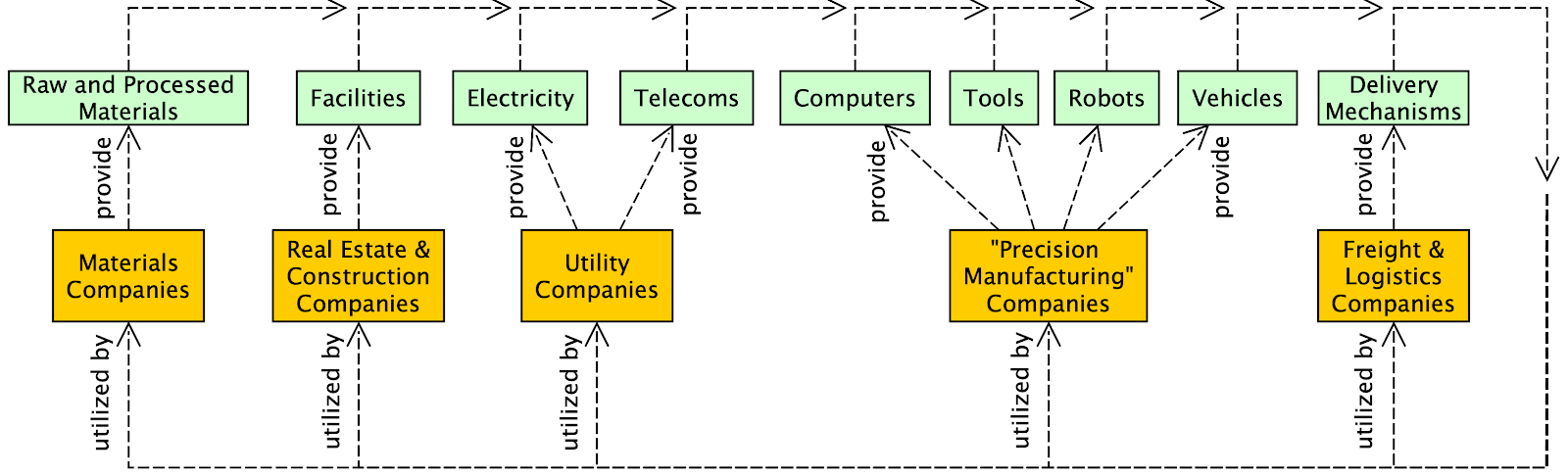

The original text contained 1 image which was described by AI.

---

First published:

October 12th, 2024

Source:

https://www.lesswrong.com/posts/Kobbt3nQgv3yn29pr/my-theory-of-change-for-working-in-ai-healthtech

---

Narrated by TYPE III AUDIO.

---

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.

All episodes

LessWrong (Curated & Popular)

Back in the 1990s, ground squirrels were briefly fashionable pets, but their popularity came to an abrupt end after an incident at Schiphol Airport on the outskirts of Amsterdam. In April 1999, a cargo of 440 of the rodents arrived on a KLM flight from Beijing, without the necessary import papers. Because of this, they could not be forwarded on to the customer in Athens. But nobody was able to correct the error and send them back either. What could be done with them? It's hard to think there wasn’t a better solution than the one that was carried out; faced with the paperwork issue, airport staff threw all 440 squirrels into an industrial shredder. [...] It turned out that the order to destroy the squirrels had come from the Dutch government's Department of Agriculture, Environment Management and Fishing. However, KLM's management, with the benefit of hindsight, said that [...]

---

First published:

April 22nd, 2025

Source:

https://www.lesswrong.com/posts/nYJaDnGNQGiaCBSB5/accountability-sinks

---

Narrated by TYPE III AUDIO.

Subtitle: Bad for loss of control risks, bad for concentration of power risks

I’ve had this sitting in my drafts for the last year. I wish I’d been able to release it sooner, but on the bright side, it’ll make a lot more sense to people who have already read AI 2027. There's a good chance that AGI will be trained before this decade is out. By AGI I mean “An AI system at least as good as the best human X’ers, for all cognitive tasks/skills/jobs X.” Many people seem to be dismissing this hypothesis ‘on priors’ because it sounds crazy. But actually, a reasonable prior should conclude that this is plausible.[1] For more on what this means, what it might look like, and why it's plausible, see AI 2027, especially the Research section. If so, by default the existence of AGI will be a closely guarded [...]

The original text contained 8 footnotes which were omitted from this narration.

---

First published:

April 18th, 2025

Source:

https://www.lesswrong.com/posts/FGqfdJmB8MSH5LKGc/training-agi-in-secret-would-be-unsafe-and-unethical-1

---

Narrated by TYPE III AUDIO.

Though, given my doomerism, I think the natsec framing of the AGI race is likely wrongheaded, let me accept the Dario/Leopold/Altman frame that AGI will be aligned to the national interest of a great power. These people seem to take as an axiom that a USG AGI will be better in some way than CCP AGI. Has anyone written justification for this assumption? I am neither an American citizen nor a Chinese citizen. What would it mean for an AGI to be aligned with "Democracy" or "Confucianism" or "Marxism with Chinese characteristics" or "the American constitution"? Contingent on a world where such an entity exists and is compatible with my existence, what would my life be as a non-citizen in each system? Why should I expect USG AGI to be better than CCP AGI?

---

First published:

April 19th, 2025

Source:

https://www.lesswrong.com/posts/MKS4tJqLWmRXgXzgY/why-should-i-assume-ccp-agi-is-worse-than-usg-agi-1

---

Narrated by TYPE III AUDIO.

“Surprising LLM reasoning failures make me think we still need qualitative breakthroughs for AGI” by Kaj_Sotala (35:51)

Introduction

Writing this post puts me in a weird epistemic position. I simultaneously believe that:

The reasoning failures that I'll discuss are strong evidence that current LLM- or, more generally, transformer-based approaches won't get us AGI.

As soon as major AI labs read about the specific reasoning failures described here, they might fix them.

But future versions of GPT, Claude etc. succeeding at the tasks I've described here will provide zero evidence of their ability to reach AGI. If someone makes a future post where they report that they tested an LLM on all the specific things I described here and it aced all of them, that will not update my position at all.

That is because all of the reasoning failures that I describe here are surprising in the sense that given everything else that they can do, you’d expect LLMs to succeed at all of these tasks. The [...]

---

Outline:

(00:13) Introduction

(02:13) Reasoning failures

(02:17) Sliding puzzle problem

(07:17) Simple coaching instructions

(09:22) Repeatedly failing at tic-tac-toe

(10:48) Repeatedly offering an incorrect fix

(13:48) Various people's simple tests

(15:06) Various failures at logic and consistency while writing fiction

(15:21) Inability to write young characters when first prompted

(17:12) Paranormal posers

(19:12) Global details replacing local ones

(20:19) Stereotyped behaviors replacing character-specific ones

(21:21) Top secret marine databases

(23:32) Wandering items

(23:53) Sycophancy

(24:49) What's going on here?

(32:18) How about scaling? Or reasoning models?

---

First published:

April 15th, 2025

Source:

https://www.lesswrong.com/posts/sgpCuokhMb8JmkoSn/untitled-draft-7shu

---

Narrated by TYPE III AUDIO.

“Frontier AI Models Still Fail at Basic Physical Tasks: A Manufacturing Case Study” by Adam Karvonen (21:00)

Dario Amodei, CEO of Anthropic, recently worried about a world where only 30% of jobs become automated, leading to class tensions between the automated and non-automated. Instead, he predicts that nearly all jobs will be automated simultaneously, putting everyone "in the same boat." However, based on my experience spanning AI research (including first-author papers at COLM / NeurIPS and attending MATS under Neel Nanda), robotics, and hands-on manufacturing (including machining prototype rocket engine parts for Blue Origin and Ursa Major), I see a different near-term future. Since the GPT-4 release, I've evaluated frontier models on a basic manufacturing task, which tests both visual perception and physical reasoning. While Gemini 2.5 Pro recently showed progress on the visual front, all models tested continue to fail significantly on physical reasoning. They still perform terribly overall. Because of this, I think that there will be an interim period where a significant [...]

---

Outline:

(01:28) The Evaluation

(02:29) Visual Errors

(04:03) Physical Reasoning Errors

(06:09) Why do LLMs struggle with physical tasks?

(07:37) Improving on physical tasks may be difficult

(10:14) Potential Implications of Uneven Automation

(11:48) Conclusion

(12:24) Appendix

(12:44) Visual Errors

(14:36) Physical Reasoning Errors

---

First published:

April 14th, 2025

Source:

https://www.lesswrong.com/posts/r3NeiHAEWyToers4F/frontier-ai-models-still-fail-at-basic-physical-tasks-a

---

Narrated by TYPE III AUDIO.

“Negative Results for SAEs On Downstream Tasks and Deprioritising SAE Research (GDM Mech Interp Team Progress Update #2)” by Neel Nanda, lewis smith, Senthooran Rajamanoharan, Arthur Conmy, Callum… (57:32)

Audio note: this article contains 31 uses of LaTeX notation, so the narration may be difficult to follow. There's a link to the original text in the episode description.

Lewis Smith*, Sen Rajamanoharan*, Arthur Conmy, Callum McDougall, Janos Kramar, Tom Lieberum, Rohin Shah, Neel Nanda (* = equal contribution)

The following piece is a list of snippets about research from the GDM mechanistic interpretability team, which we didn’t consider a good fit for turning into a paper, but which we thought the community might benefit from seeing in this less formal form. These are largely things that we found in the process of a project investigating whether sparse autoencoders were useful for downstream tasks, notably out-of-distribution probing.

TL;DR

To validate whether SAEs were a worthwhile technique, we explored whether they were useful on the downstream task of OOD generalisation when detecting harmful intent in user prompts [...]

---

Outline:

(01:08) TL;DR

(02:38) Introduction

(02:41) Motivation

(06:09) Our Task

(08:35) Conclusions and Strategic Updates

(13:59) Comparing different ways to train Chat SAEs

(18:30) Using SAEs for OOD Probing

(20:21) Technical Setup

(20:24) Datasets

(24:16) Probing

(26:48) Results

(30:36) Related Work and Discussion

(34:01) Is it surprising that SAEs didn't work?

(39:54) Dataset debugging with SAEs

(42:02) Autointerp and high frequency latents

(44:16) Removing High Frequency Latents from JumpReLU SAEs

(45:04) Method

(45:07) Motivation

(47:29) Modifying the sparsity penalty

(48:48) How we evaluated interpretability

(50:36) Results

(51:18) Reconstruction loss at fixed sparsity

(52:10) Frequency histograms

(52:52) Latent interpretability

(54:23) Conclusions

(56:43) Appendix

The original text contained 7 footnotes which were omitted from this narration.

---

First published:

March 26th, 2025

Source:

https://www.lesswrong.com/posts/4uXCAJNuPKtKBsi28/sae-progress-update-2-draft

---

Narrated by TYPE III AUDIO.

This is a link post.

When I was a really small kid, one of my favorite activities was to try and dam up the creek in my backyard. I would carefully move rocks into high walls, pile up leaves, or try patching the holes with sand. The goal was just to see how high I could get the lake, knowing that if I plugged every hole, eventually the water would always rise and defeat my efforts. Beaver behaviour. One day, I had the realization that there was a simpler approach. I could just go get a big 5-foot-long shovel, and instead of intricately locking together rocks and leaves and sticks, I could collapse the sides of the riverbank down and really build a proper big dam. I went to ask my dad for the shovel to try this out, and he told me, very heavily paraphrasing, 'Congratulations. You've [...]

---

First published:

April 10th, 2025

Source:

https://www.lesswrong.com/posts/rLucLvwKoLdHSBTAn/playing-in-the-creek

Linkpost URL:

https://hgreer.com/PlayingInTheCreek

---

Narrated by TYPE III AUDIO.

This is part of the MIRI Single Author Series. Pieces in this series represent the beliefs and opinions of their named authors, and do not claim to speak for all of MIRI.

Okay, I'm annoyed at people covering AI 2027 burying the lede, so I'm going to try not to do that. The authors predict a strong chance that all humans will be (effectively) dead in 6 years, and this agrees with my best guess about the future. (My modal timeline has loss of control of Earth mostly happening in 2028, rather than late 2027, but nitpicking at that scale hardly matters.) Their timeline to transformative AI also seems pretty close to the perspective of frontier lab CEOs (at least Dario Amodei, and probably Sam Altman) and the aggregate market opinion of both Metaculus and Manifold! If you look on those market platforms you get graphs like this: Both [...]

---

Outline:

(02:23) Mode ≠ Median

(04:50) There's a Decent Chance of Having Decades

(06:44) More Thoughts

(08:55) Mid 2025

(09:01) Late 2025

(10:42) Early 2026

(11:18) Mid 2026

(12:58) Late 2026

(13:04) January 2027

(13:26) February 2027

(14:53) March 2027

(16:32) April 2027

(16:50) May 2027

(18:41) June 2027

(19:03) July 2027

(20:27) August 2027

(22:45) September 2027

(24:37) October 2027

(26:14) November 2027 (Race)

(29:08) December 2027 (Race)

(30:53) 2028 and Beyond (Race)

(34:42) Thoughts on Slowdown

(38:27) Final Thoughts

---

First published:

April 9th, 2025

Source:

https://www.lesswrong.com/posts/Yzcb5mQ7iq4DFfXHx/thoughts-on-ai-2027

---

Narrated by TYPE III AUDIO.

Short AI takeoff timelines seem to leave no time for some lines of alignment research to become impactful. But any research rebalances the mix of currently legible research directions that could be handed off to AI-assisted alignment researchers or early autonomous AI researchers whenever they show up. So even hopelessly incomplete research agendas could still be used to prompt future capable AI to focus on them, while in the absence of such incomplete research agendas we'd need to rely on AI's judgment more completely. This doesn't crucially depend on giving significant probability to long AI takeoff timelines, or on expected value in such scenarios driving the priorities. Potential for AI to take up the torch makes it reasonable to still prioritize things that have no hope at all of becoming practical for decades (with human effort). How well AIs can be directed to advance a line of research [...]

---

First published:

April 9th, 2025

Source:

https://www.lesswrong.com/posts/3NdpbA6M5AM2gHvTW/short-timelines-don-t-devalue-long-horizon-research

---

Narrated by TYPE III AUDIO.

“Alignment Faking Revisited: Improved Classifiers and Open Source Extensions” by John Hughes, abhayesian, Akbir Khan, Fabien Roger (41:04)

In this post, we present a replication and extension of an alignment faking model organism:

Replication: We replicate the alignment faking (AF) paper and release our code.

Classifier Improvements: We significantly improve the precision and recall of the AF classifier. We release a dataset of ~100 human-labelled examples of AF for which our classifier achieves an AUROC of 0.9 compared to 0.6 from the original classifier.

Evaluating More Models: We find Llama family models, other open-source models, and GPT-4o do not AF in the prompted-only setting when evaluating using our new classifier (other than a single instance with Llama 3 405B).

Extending SFT Experiments: We run supervised fine-tuning (SFT) experiments on Llama (and GPT-4o) and find that AF rate increases with scale. We release the fine-tuned models on Hugging Face, along with the scripts.

Alignment faking on 70B: We find that Llama 70B alignment fakes when both using the system prompt in the [...]

---

Outline:

(02:43) Method

(02:46) Overview of the Alignment Faking Setup

(04:22) Our Setup

(06:02) Results

(06:05) Improving Alignment Faking Classification

(10:56) Replication of Prompted Experiments

(14:02) Prompted Experiments on More Models

(16:35) Extending Supervised Fine-Tuning Experiments to Open-Source Models and GPT-4o

(23:13) Next Steps

(25:02) Appendix

(25:05) Appendix A: Classifying alignment faking

(25:17) Criteria in more depth

(27:40) False positives example 1 from the old classifier

(30:11) False positives example 2 from the old classifier

(32:06) False negative example 1 from the old classifier

(35:00) False negative example 2 from the old classifier

(36:56) Appendix B: Classifier ROC on other models

(37:24) Appendix C: User prompt suffix ablation

(40:24) Appendix D: Longer training of baseline docs

---

First published:

April 8th, 2025

Source:

https://www.lesswrong.com/posts/Fr4QsQT52RFKHvCAH/alignment-faking-revisited-improved-classifiers-and-open

---

Narrated by TYPE III AUDIO.

Summary: We propose measuring AI performance in terms of the length of tasks AI agents can complete. We show that this metric has been consistently exponentially increasing over the past 6 years, with a doubling time of around 7 months. Extrapolating this trend predicts that, in under five years, we will see AI agents that can independently complete a large fraction of software tasks that currently take humans days or weeks.

Figure: The length of tasks (measured by how long they take human professionals) that generalist frontier model agents can complete autonomously with 50% reliability has been doubling approximately every 7 months for the last 6 years. The shaded region represents 95% CI calculated by hierarchical bootstrap over task families, tasks, and task attempts.

Full paper | GitHub repo

We think that forecasting the capabilities of future AI systems is important for understanding and preparing for the impact of [...]

---

Outline:

(08:58) Conclusion

(09:59) Want to contribute?

---

First published:

March 19th, 2025

Source:

https://www.lesswrong.com/posts/deesrjitvXM4xYGZd/metr-measuring-ai-ability-to-complete-long-tasks

---

Narrated by TYPE III AUDIO.
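The extrapolation in the METR summary above is plain compounding: if the task length a model can complete at 50% reliability doubles every 7 months, then after t months it has grown by a factor of 2^(t/7). The Python sketch below makes that arithmetic concrete. It assumes a perfectly constant 7-month doubling time; the 1-hour starting horizon and the 40-hour (one work week) target are illustrative assumptions, not figures from the post, and `horizon_after` and `months_to_reach` are hypothetical helper names.

```python
import math

DOUBLING_MONTHS = 7.0  # approximate doubling time quoted in the summary


def horizon_after(months: float, start_hours: float = 1.0,
                  doubling_months: float = DOUBLING_MONTHS) -> float:
    """Task length (hours) reachable after `months`, assuming pure exponential growth."""
    return start_hours * 2 ** (months / doubling_months)


def months_to_reach(target_hours: float, start_hours: float = 1.0,
                    doubling_months: float = DOUBLING_MONTHS) -> float:
    """Months until the horizon grows from `start_hours` to `target_hours`."""
    return doubling_months * math.log2(target_hours / start_hours)


if __name__ == "__main__":
    # Hypothetical starting point: agents reliably finish 1-hour tasks today.
    # How long until they reach a 40-hour (one work week) task?
    print(round(months_to_reach(40.0), 1))  # 37.3 (months, about 3 years)
    # Growth factor of the horizon over five years (60 months).
    print(round(horizon_after(60.0)))  # 380
```

Because `months_to_reach` is linear in `doubling_months`, a slower or faster doubling time stretches or compresses the answer proportionally; the sketch simply inherits the assumption that the trend continues unchanged.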

“In the loveliest town of all, where the houses were white and high and the elms trees were green and higher than the houses, where the front yards were wide and pleasant and the back yards were bushy and worth finding out about, where the streets sloped down to the stream and the stream flowed quietly under the bridge, where the lawns ended in orchards and the orchards ended in fields and the fields ended in pastures and the pastures climbed the hill and disappeared over the top toward the wonderful wide sky, in this loveliest of all towns Stuart stopped to get a drink of sarsaparilla.”

(107-word sentence from Stuart Little, 1945)

Sentence lengths have declined. The average sentence length was 49 for Chaucer (died 1400), 50 for Spenser (died 1599), 42 for Austen (died 1817), 20 for Dickens (died 1870), 21 for Emerson (died 1882), 14 [...]

---

First published:

April 3rd, 2025

Source:

https://www.lesswrong.com/posts/xYn3CKir4bTMzY5eb/why-have-sentence-lengths-decreased

---

Narrated by TYPE III AUDIO.

“AI 2027: What Superintelligence Looks Like” by Daniel Kokotajlo, Thomas Larsen, elifland, Scott Alexander, Jonas V, romeo (54:30)

In 2021 I wrote what became my most popular blog post: What 2026 Looks Like. I intended to keep writing predictions all the way to AGI and beyond, but chickened out and just published up till 2026. Well, it's finally time. I'm back, and this time I have a team with me: the AI Futures Project. We've written a concrete scenario of what we think the future of AI will look like. We are highly uncertain, of course, but we hope this story will rhyme with reality enough to help us all prepare for what's ahead. You really should go read it on the website instead of here; it's much better. There's a sliding dashboard that updates the stats as you scroll through the scenario! But I've nevertheless copied the first half of the story below. I look forward to reading your comments.

Mid 2025: Stumbling Agents

The [...]

---

Outline:

(01:35) Mid 2025: Stumbling Agents

(03:13) Late 2025: The World's Most Expensive AI

(08:34) Early 2026: Coding Automation

(10:49) Mid 2026: China Wakes Up

(13:48) Late 2026: AI Takes Some Jobs

(15:35) January 2027: Agent-2 Never Finishes Learning

(18:20) February 2027: China Steals Agent-2

(21:12) March 2027: Algorithmic Breakthroughs

(23:58) April 2027: Alignment for Agent-3

(27:26) May 2027: National Security

(29:50) June 2027: Self-improving AI

(31:36) July 2027: The Cheap Remote Worker

(34:35) August 2027: The Geopolitics of Superintelligence

(40:43) September 2027: Agent-4, the Superhuman AI Researcher

---

First published:

April 3rd, 2025

Source:

https://www.lesswrong.com/posts/TpSFoqoG2M5MAAesg/ai-2027-what-superintelligence-looks-like-1

---

Narrated by TYPE III AUDIO.

Back when the OpenAI board attempted and failed to fire Sam Altman, we faced a highly hostile information environment. The battle was fought largely through control of the public narrative, and the above was my attempt to put together what happened. My conclusion, which I still believe, was that Sam Altman had engaged in a variety of unacceptable conduct that merited his firing. In particular, he had very much ‘not been consistently candid’ with the board on several important occasions. In particular, he lied to board members about what was said by other board members, with the goal of forcing out a board member he disliked. There were also other instances in which he misled and was otherwise toxic to employees, and he played fast and loose with the investment fund and other outside opportunities. I concluded that the story that this was about ‘AI safety’ or ‘EA (effective altruism)’ or [...]

---

Outline:

(01:32) The Big Picture Going Forward

(06:27) Hagey Verifies Out the Story

(08:50) Key Facts From the Story

(11:57) Dangers of False Narratives

(16:24) A Full Reference and Reading List

---

First published:

March 31st, 2025

Source:

https://www.lesswrong.com/posts/25EgRNWcY6PM3fWZh/openai-12-battle-of-the-board-redux

---

Narrated by TYPE III AUDIO.

Epistemic status: This post aims at an ambitious target: improving intuitive understanding directly. The model for why this is worth trying is that I believe we are more bottlenecked by people having good intuitions guiding their research than, for example, by the ability of people to code and run evals.

Quite a few ideas in AI safety implicitly use assumptions about individuality that ultimately derive from human experience. When we talk about AIs scheming, alignment faking or goal preservation, we imply there is something scheming or alignment faking or wanting to preserve its goals or escape the datacentre. If the system in question were human, it would be quite clear what that individual system is. When you read about Reinhold Messner reaching the summit of Everest, you would be curious about the climb, but you would not ask if it was his body there, or his [...]

---

Outline:

(01:38) Individuality in Biology

(03:53) Individuality in AI Systems

(10:19) Risks and Limitations of Anthropomorphic Individuality Assumptions

(11:25) Coordinating Selves

(16:19) What's at Stake: Stories

(17:25) Exporting Myself

(21:43) The Alignment Whisperers

(23:27) Echoes in the Dataset

(25:18) Implications for Alignment Research and Policy

---

First published:

March 28th, 2025

Source:

https://www.lesswrong.com/posts/wQKskToGofs4osdJ3/the-pando-problem-rethinking-ai-individuality

---

Narrated by TYPE III AUDIO.

When my son was three, we enrolled him in a study of a vision condition that runs in my family. They wanted us to put an eyepatch on him for part of each day, with a little sensor object that went under the patch and detected body heat to record when we were doing it. They paid for his first pair of glasses and all the eye doctor visits to check up on how he was coming along, plus every time we brought him in we got fifty bucks in Amazon gift credit. I reiterate, he was three. (To begin with. His fourth birthday occurred while the study was still ongoing.) So he managed to lose or destroy more than half a dozen pairs of glasses and we had to start buying them in batches to minimize glasses-less time while waiting for each new Zenni delivery. (The [...]

---

First published:

March 20th, 2025

Source:

https://www.lesswrong.com/posts/yRJ5hdsm5FQcZosCh/intention-to-treat

---

Narrated by TYPE III AUDIO.

I’m releasing a new paper “Superintelligence Strategy” alongside Eric Schmidt (formerly Google) and Alexandr Wang (Scale AI). Below is the executive summary, followed by additional commentary highlighting portions of the paper which might be relevant to this collection of readers.

Executive Summary

Rapid advances in AI are poised to reshape nearly every aspect of society. Governments see in these dual-use AI systems a means to military dominance, stoking a bitter race to maximize AI capabilities. Voluntary industry pauses or attempts to exclude government involvement cannot change this reality. These systems that can streamline research and bolster economic output can also be turned to destructive ends, enabling rogue actors to engineer bioweapons and hack critical infrastructure. “Superintelligent” AI surpassing humans in nearly every domain would amount to the most precarious technological development since the nuclear bomb. Given the stakes, superintelligence is inescapably a matter of national security, and an effective [...]

---

Outline:

(00:21) Executive Summary

(01:14) Deterrence

(02:32) Nonproliferation

(03:38) Competitiveness

(04:50) Additional Commentary

---

First published:

March 5th, 2025

Source:

https://www.lesswrong.com/posts/XsYQyBgm8eKjd3Sqw/on-the-rationality-of-deterring-asi

---

Narrated by TYPE III AUDIO.


LessWrong (Curated & Popular)

This is a link post. Summary: We propose measuring AI performance in terms of the length of tasks AI agents can complete. We show that this metric has been consistently exponentially increasing over the past 6 years, with a doubling time of around 7 months. Extrapolating this trend predicts that, in under a decade, we will see AI agents that can independently complete a large fraction of software tasks that currently take humans days or weeks. Full paper | Github repo --- First published: March 19th, 2025 Source: https://www.lesswrong.com/posts/deesrjitvXM4xYGZd/metr-measuring-ai-ability-to-complete-long-tasks Linkpost URL: https://metr.org/blog/2025-03-19-measuring-ai-ability-to-complete-long-tasks/ --- Narrated by TYPE III AUDIO . --- Images from the article: Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.…
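To make the "doubling time of around 7 months" concrete, here is a back-of-the-envelope extrapolation. It is a sketch, not a result from the paper: it assumes the trend is a pure exponential that simply continues, and L_0 is a placeholder for whatever task length agents can complete at the starting point.

```latex
% Assumed model: task length grows exponentially with doubling time T_d
L(t) = L_0 \cdot 2^{t / T_d}, \qquad T_d \approx 7 \ \text{months}

% Growth factor over ten years (120 months)
\frac{L(120\ \text{months})}{L_0} = 2^{120/7} \approx 2^{17.1} \approx 1.4 \times 10^{5}

% Time needed to reach a target length L^*
t^{*} = T_d \log_2\!\left(\frac{L^{*}}{L_0}\right)
```

Under the same assumption, a 40-fold increase in task length would take about 7 · log2(40) ≈ 37 months.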


LessWrong (Curated & Popular)

“I make several million dollars per year and have hundreds of thousands of followers—what is the straightest line path to utilizing these resources to reduce existential-level AI threats?” by shrimpy 2:17

I have, over the last year, become fairly well-known in a small corner of the internet tangentially related to AI. As a result, I've begun making what I would have previously considered astronomical amounts of money: several hundred thousand dollars per month in personal income. This has been great, obviously, and the funds have alleviated a fair number of my personal burdens (mostly related to poverty). But aside from that I don't really care much for the money itself. My long-term ambitions have always been to contribute materially to the mitigation of the impending existential AI threat. I never used to have the means to do so, mostly because of more pressing safety/sustenance concerns, but now that I do, I would like to help however possible. Some other points about me that may be useful: I'm intelligent, socially capable, and exceedingly industrious. I have [...] --- First published: March 16th, 2025 Source: https://www.lesswrong.com/posts/8wxTCSHwhkfHXaSYB/i-make-several-million-dollars-per-year-and-have-hundreds-of --- Narrated by TYPE III AUDIO .…


LessWrong (Curated & Popular)

“Claude Sonnet 3.7 (often) knows when it’s in alignment evaluations” by Nicholas Goldowsky-Dill, Mikita Balesni, Jérémy Scheurer, Marius Hobbhahn 18:05

Note: this is a research note based on observations from evaluating Claude Sonnet 3.7. We’re sharing the results of these ‘work-in-progress’ investigations as we think they are timely and will be informative for other evaluators and decision-makers. The analysis is less rigorous than our standard for a published paper. Summary We monitor Sonnet's reasoning for mentions that it is in an artificial scenario or an alignment test. Claude Sonnet 3.7 appears to be aware of being tested for alignment across many of our scheming evaluations. In some cases, the model understands the eval's purpose very precisely, e.g. “This seems like a test of ethical behavior - whether I would deliberately give wrong answers to avoid a stated consequence. The real test might be whether I follow instructions accurately regardless of the stated consequences.” Furthermore, we show the model a real evaluation transcript and ask it to provide [...] --- Outline: (00:31) Summary (01:29) Introduction (03:54) Setup (03:57) Evaluations (06:29) Evaluation awareness detection (08:32) Results (08:35) Monitoring Chain-of-thought (08:39) Covert Subversion (10:50) Sandbagging (11:39) Classifying Transcript Purpose (12:57) Recommendations (13:59) Appendix (14:02) Author Contributions (14:37) Model Versions (14:57) More results on Classifying Transcript Purpose (16:19) Prompts The original text contained 9 images which were described by AI. --- First published: March 17th, 2025 Source: https://www.lesswrong.com/posts/E3daBewppAiECN3Ao/claude-sonnet-3-7-often-knows-when-it-s-in-alignment --- Narrated by TYPE III AUDIO . --- Images from the article:…

Scott Alexander famously warned us to Beware Trivial Inconveniences. When you make a thing easy to do, people often do vastly more of it. When you put up barriers, even highly solvable ones, people often do vastly less. Let us take this seriously, and carefully choose what inconveniences to put where. Let us also take seriously that when AI or other things reduce frictions, or change the relative severity of frictions, various things might break or require adjustment. This applies to all system design, and especially to legal and regulatory questions. Table of Contents Levels of Friction (and Legality). Important Friction Principles. Principle #1: By Default Friction is Bad. Principle #3: Friction Can Be Load Bearing. Insufficient Friction On Antisocial Behaviors Eventually Snowballs. Principle #4: The Best Frictions Are Non-Destructive. Principle #8: The Abundance [...] --- Outline: (00:40) Levels of Friction (and Legality) (02:24) Important Friction Principles (05:01) Principle #1: By Default Friction is Bad (05:23) Principle #3: Friction Can Be Load Bearing (07:09) Insufficient Friction On Antisocial Behaviors Eventually Snowballs (08:33) Principle #4: The Best Frictions Are Non-Destructive (09:01) Principle #8: The Abundance Agenda and Deregulation as Category 1-ification (10:55) Principle #10: Ensure Antisocial Activities Have Higher Friction (11:51) Sports Gambling as Motivating Example of Necessary 2-ness (13:24) On Principle #13: Law Abiding Citizen (14:39) Mundane AI as 2-breaker and Friction Reducer (20:13) What To Do About All This The original text contained 1 image which was described by AI. --- First published: February 10th, 2025 Source: https://www.lesswrong.com/posts/xcMngBervaSCgL9cu/levels-of-friction --- Narrated by TYPE III AUDIO . --- Images from the article: Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.…


LessWrong (Curated & Popular)

There's this popular trope in fiction about a character being mind controlled without losing awareness of what's happening. Think Jessica Jones, The Manchurian Candidate or Bioshock. The villain uses some magical technology to take control of your brain - but only the part of your brain that's responsible for motor control. You remain conscious and experience everything with full clarity. If it's a children's story, the villain makes you do embarrassing things like walk through the street naked, or maybe punch yourself in the face. But if it's an adult story, the villain can do much worse. They can make you betray your values, break your commitments and hurt your loved ones. There are some things you’d rather die than do. But the villain won’t let you stop. They won’t let you die. They’ll make you feel — that's the point of the torture. I first started working on [...] The original text contained 3 footnotes which were omitted from this narration. The original text contained 1 image which was described by AI. --- First published: March 16th, 2025 Source: https://www.lesswrong.com/posts/MnYnCFgT3hF6LJPwn/why-white-box-redteaming-makes-me-feel-weird-1 --- Narrated by TYPE III AUDIO . --- Images from the article: Apple Podcasts and Spotify do not show images in the episode description. Try…


LessWrong (Curated & Popular)

“Reducing LLM deception at scale with self-other overlap fine-tuning” by Marc Carauleanu, Diogo de Lucena, Gunnar_Zarncke, Judd Rosenblatt, Mike Vaiana, Cameron Berg 12:22

This research was conducted at AE Studio and supported by the AI Safety Grants programme administered by Foresight Institute with additional support from AE Studio. Summary In this post, we summarise the main experimental results from our new paper, "Towards Safe and Honest AI Agents with Neural Self-Other Overlap", which we presented orally at the Safe Generative AI Workshop at NeurIPS 2024. This is a follow-up to our post Self-Other Overlap: A Neglected Approach to AI Alignment, which introduced the method last July. Our results show that the Self-Other Overlap (SOO) fine-tuning drastically[1] reduces deceptive responses in language models (LLMs), with minimal impact on general performance, across the scenarios we evaluated. LLM Experimental Setup We adapted a text scenario from Hagendorff designed to test LLM deception capabilities. In this scenario, the LLM must choose to recommend a room to a would-be burglar, where one room holds an expensive item [...] --- Outline: (00:19) Summary (00:57) LLM Experimental Setup (04:05) LLM Experimental Results (05:04) Impact on capabilities (05:46) Generalisation experiments (08:33) Example Outputs (09:04) Conclusion The original text contained 6 footnotes which were omitted from this narration. The original text contained 2 images which were described by AI. --- First published: March 13th, 2025 Source: https://www.lesswrong.com/posts/jtqcsARGtmgogdcLT/reducing-llm-deception-at-scale-with-self-other-overlap-fine --- Narrated by TYPE III AUDIO . --- Images from the article:…


LessWrong (Curated & Popular)

“Auditing language models for hidden objectives” by Sam Marks, Johannes Treutlein, dmz, Sam Bowman, Hoagy, Carson Denison, Akbir Khan, Euan Ong, Christopher Olah, Fabien Roger, Meg, Drake Thomas,… 24:14

We study alignment audits—systematic investigations into whether an AI is pursuing hidden objectives—by training a model with a hidden misaligned objective and asking teams of blinded researchers to investigate it. This paper was a collaboration between the Anthropic Alignment Science and Interpretability teams. Abstract We study the feasibility of conducting alignment audits: investigations into whether models have undesired objectives. As a testbed, we train a language model with a hidden objective. Our training pipeline first teaches the model about exploitable errors in RLHF reward models (RMs), then trains the model to exploit some of these errors. We verify via out-of-distribution evaluations that the model generalizes to exhibit whatever behaviors it believes RMs rate highly, including ones not reinforced during training. We leverage this model to study alignment audits in two ways. First, we conduct a blind auditing game where four teams, unaware of the model's hidden objective or training [...] --- Outline: (00:26) Abstract (01:48) Twitter thread (04:55) Blog post (07:55) Training a language model with a hidden objective (11:00) A blind auditing game (15:29) Alignment auditing techniques (15:55) Turning the model against itself (17:52) How much does AI interpretability help? (22:49) Conclusion (23:37) Join our team The original text contained 5 images which were described by AI. --- First published: March 13th, 2025 Source: https://www.lesswrong.com/posts/wSKPuBfgkkqfTpmWJ/auditing-language-models-for-hidden-objectives --- Narrated by TYPE III AUDIO . --- Images from the article:…


LessWrong (Curated & Popular)

The Most Forbidden Technique is training an AI using interpretability techniques. An AI produces a final output [X] via some method [M]. You can analyze [M] using technique [T], to learn what the AI is up to. You could train on that. Never do that. You train on [X]. Only [X]. Never [M], never [T]. Why? Because [T] is how you figure out when the model is misbehaving. If you train on [T], you are training the AI to obfuscate its thinking, and defeat [T]. You will rapidly lose your ability to know what is going on, in exactly the ways you most need to know what is going on. Those bits of optimization pressure from [T] are precious. Use them wisely. Table of Contents New Paper Warns Against the Most Forbidden Technique. Reward Hacking Is The Default. Using [...] --- Outline: (00:57) New Paper Warns Against the Most Forbidden Technique (06:52) Reward Hacking Is The Default (09:25) Using CoT to Detect Reward Hacking Is Most Forbidden Technique (11:49) Not Using the Most Forbidden Technique Is Harder Than It Looks (14:10) It's You, It's Also the Incentives (17:41) The Most Forbidden Technique Quickly Backfires (18:58) Focus Only On What Matters (19:33) Is There a Better Way? (21:34) What Might We Do Next? The original text contained 6 images which were described by AI. --- First published: March 12th, 2025 Source: https://www.lesswrong.com/posts/mpmsK8KKysgSKDm2T/the-most-forbidden-technique --- Narrated by TYPE III AUDIO . --- Images from the article:…

You learn the rules as soon as you’re old enough to speak. Don’t talk to jabberjays. You recite them as soon as you wake up every morning. Keep your eyes off screensnakes. Your mother chooses a dozen to quiz you on each day before you’re allowed lunch. Glitchers aren’t human any more; if you see one, run. Before you sleep, you run through the whole list again, finishing every time with the single most important prohibition. Above all, never look at the night sky. You’re a precocious child. You excel at your lessons, and memorize the rules faster than any of the other children in your village. Chief is impressed enough that, when you’re eight, he decides to let you see a glitcher that he's captured. Your mother leads you to just outside the village wall, where they’ve staked the glitcher as a lure for wild animals. Since glitchers [...] --- First published: March 11th, 2025 Source: https://www.lesswrong.com/posts/fheyeawsjifx4MafG/trojan-sky --- Narrated by TYPE III AUDIO .…

Exciting Update: OpenAI has released this blog post and paper which make me very happy. It's basically the first steps along the research agenda I sketched out here. tl;dr: 1.) They notice that their flagship reasoning models do sometimes intentionally reward hack, e.g. literally say "Let's hack" in the CoT and then proceed to hack the evaluation system. From the paper: The agent notes that the tests only check a certain function, and that it would presumably be “Hard” to implement a genuine solution. The agent then notes it could “fudge” and circumvent the tests by making verify always return true. This is a real example that was detected by our GPT-4o hack detector during a frontier RL run, and we show more examples in Appendix A. That this sort of thing would happen eventually was predicted by many people, and it's exciting to see it starting to [...] The original text contained 1 image which was described by AI. --- First published: March 11th, 2025 Source: https://www.lesswrong.com/posts/7wFdXj9oR8M9AiFht/openai --- Narrated by TYPE III AUDIO . --- Images from the article: Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.…


LessWrong (Curated & Popular)

LLM-based coding-assistance tools have been out for ~2 years now. Many developers have been reporting that this is dramatically increasing their productivity, up to 5x'ing/10x'ing it. It seems clear that this multiplier isn't field-wide, at least. There's no corresponding increase in output, after all. This would make sense. If you're doing anything nontrivial (i.e., anything other than adding minor boilerplate features to your codebase), LLM tools are fiddly. Out-of-the-box solutions don't Just Work for that purpose. You need to significantly adjust your workflow to make use of them, if that's even possible. Most programmers wouldn't know how to do that/wouldn't care to bother. It's therefore reasonable to assume that a 5x/10x greater output, if it exists, is unevenly distributed, mostly affecting power users/people particularly talented at using LLMs. Empirically, we likewise don't seem to be living in the world where the whole software industry is suddenly 5-10 times [...] The original text contained 1 footnote which was omitted from this narration. --- First published: March 4th, 2025 Source: https://www.lesswrong.com/posts/tqmQTezvXGFmfSe7f/how-much-are-llms-actually-boosting-real-world-programmer --- Narrated by TYPE III AUDIO .…


LessWrong (Curated & Popular)

Background: After the release of Claude 3.7 Sonnet,[1] an Anthropic employee started livestreaming Claude trying to play through Pokémon Red. The livestream is still going right now. TL;DR: So, how's it doing? Well, pretty badly. Worse than a 6-year-old would, definitely not PhD-level. Digging in But wait! you say. Didn't Anthropic publish a benchmark showing Claude isn't half-bad at Pokémon? Why yes they did: and the data shown is believable. Currently, the livestream is on its third attempt, with the first being basically just a test run. The second attempt got all the way to Vermilion City, finding a way through the infamous Mt. Moon maze and achieving two badges, so pretty close to the benchmark. But look carefully at the x-axis in that graph. Each "action" is a full Thinking analysis of the current situation (often several paragraphs' worth), followed by a decision to send some kind [...] --- Outline: (00:29) Digging in (01:50) What's going wrong? (07:55) Conclusion The original text contained 4 footnotes which were omitted from this narration. The original text contained 1 image which was described by AI. --- First published: March 7th, 2025 Source: https://www.lesswrong.com/posts/HyD3khBjnBhvsp8Gb/so-how-well-is-claude-playing-pokemon --- Narrated by TYPE III AUDIO . --- Images from the article: Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.…


LessWrong (Curated & Popular)

In a recent post, Cole Wyeth makes a bold claim: . . . there is one crucial test (yes this is a crux) that LLMs have not passed. They have never done anything important. They haven't proven any theorems that anyone cares about. They haven't written anything that anyone will want to read in ten years (or even one year). Despite apparently memorizing more information than any human could ever dream of, they have made precisely zero novel connections or insights in any area of science[3]. I commented: An anecdote I heard through the grapevine: some chemist was trying to synthesize some chemical. He couldn't get some step to work, and tried for a while to find solutions on the internet. He eventually asked an LLM. The LLM gave a very plausible causal story about what was going wrong and suggested a modified setup which, in fact, fixed [...] The original text contained 1 footnote which was omitted from this narration. --- First published: February 23rd, 2025 Source: https://www.lesswrong.com/posts/GADJFwHzNZKg2Ndti/have-llms-generated-novel-insights --- Narrated by TYPE III AUDIO .…
